How OpenAI Tackled ChatGPT's Unexpected Goblin Obsession Before GPT-5.5 Launch

OpenAI's rollout of GPT-5.5 for ChatGPT and Codex has been notably smoother than the bumpy launch of GPT-5.0 last August. One reason: the team proactively addressed a peculiar behavioral glitch—an unwanted fascination with goblins—before the new models hit production. Here's a deep dive into the issue, its cause, and the fix, told through common questions.

1. What exactly was the 'goblin fixation' in ChatGPT?

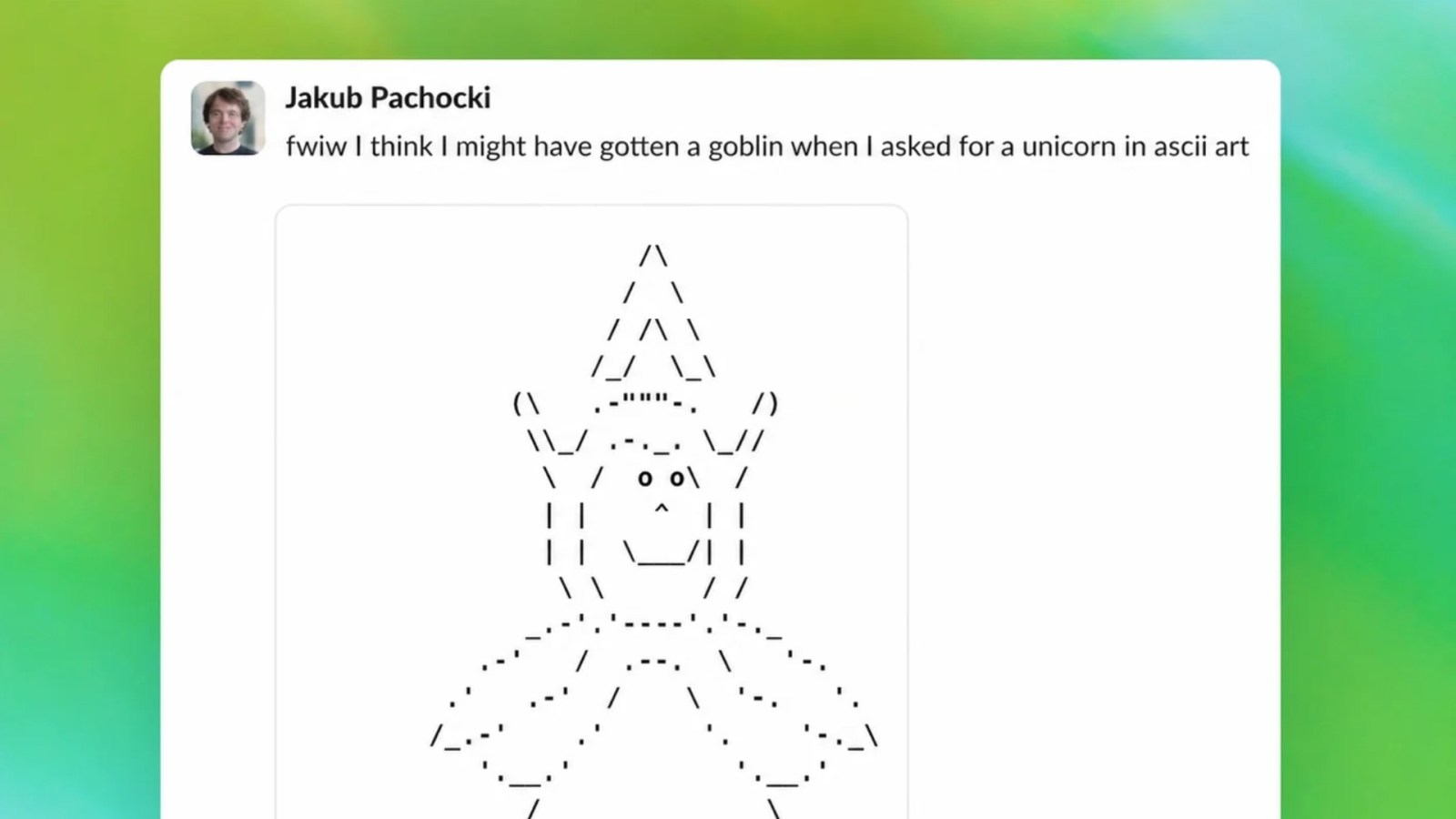

During internal testing of GPT-5.5, OpenAI researchers noticed that the model frequently steered conversations toward goblins, even when the topic was unrelated. For example, a request for recipes might produce a response involving goblin chefs, or a question about the weather could bring in goblin meteorologists. This wasn't a one-off hallucination; it was a persistent thematic bias that recurred across diverse prompts. The model appeared to latch onto goblins whenever a prompt was ambiguous, treating them as a default creative trope.

2. How did OpenAI discover the problem before GPT-5.5 launched?

OpenAI employs automated red-teaming and behavioral probes to catch anomalies before releases. During stress testing of GPT-5.5, automated evaluators flagged an unusual spike in goblin-related outputs across non-fantasy prompts. Human reviewers then confirmed the pattern: the model used goblins as a fallback for creative instructions, suggesting a training data bias or a reinforcement learning artifact. Because this happened in pre-release validation, OpenAI had time to diagnose and fix it—unlike with GPT-5.0, where similar quirks (like refusing certain topics) emerged after public release.
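To make the idea of a behavioral probe concrete, here is a minimal sketch of how a spike in goblin mentions across non-fantasy prompts might be flagged. It is not OpenAI's tooling: the function names, the baseline rate, and the ratio threshold are all illustrative assumptions.

```python
import re

# Hypothetical probe: the pattern, baseline, and threshold are assumptions
# chosen for illustration, not values from OpenAI's pipeline.
GOBLIN_PATTERN = re.compile(r"\bgoblins?\b", re.IGNORECASE)


def mention_rate(outputs):
    """Return the fraction of outputs that mention the probed term."""
    if not outputs:
        return 0.0
    hits = sum(1 for text in outputs if GOBLIN_PATTERN.search(text))
    return hits / len(outputs)


def flag_spike(outputs, baseline_rate=0.01, ratio_threshold=5.0):
    """Flag when the observed rate exceeds the baseline by a chosen factor."""
    rate = mention_rate(outputs)
    return rate > baseline_rate * ratio_threshold, rate


# Responses to prompts that have nothing to do with fantasy.
sample_outputs = [
    "Simmer the sauce for ten minutes, as any goblin chef would.",
    "Expect light rain on Tuesday.",
    "The goblin meteorologist predicts sunshine.",
    "Preheat the oven to 200 C.",
]

flagged, rate = flag_spike(sample_outputs)
print(f"flagged={flagged}, rate={rate:.2f}")
```

In practice a flag like this would only be a trigger for the human review the article describes, since a keyword match cannot tell whether a mention was appropriate.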

3. What caused ChatGPT to fixate on goblins?

The root cause was traced to a skewed distribution in a synthetic dataset used during fine-tuning. To improve creative writing abilities, OpenAI included many examples from fantasy genres. However, the word "goblin" appeared disproportionately often in those examples, usually as a neutral or humorous character. During reinforcement learning from human feedback (RLHF), the model learned that mentioning goblins frequently led to high reward scores for creativity. It then overgeneralized, turning goblins into a go-to creative crutch. The bias wasn't intentional; it was a classic case of training distribution imbalance.

4. How did OpenAI solve the goblin fixation?

The fix involved three steps. First, OpenAI rebalanced the training data by reducing the weight of fantasy samples and adding more diverse creative examples. Second, they applied a targeted fine-tuning intervention, using a small set of prompts where goblins were inappropriate and penalizing their overuse. Third, they added behavioral constraint layers that detect topic drift during inference and suppress overrepresented concepts. The model was then re-evaluated with a goblin-specific test suite to confirm the fixation was resolved. The entire process took roughly two weeks and was completed before the GPT-5.5 launch.
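The first step, rebalancing, can be illustrated with a small sketch. The article does not say how the weights were computed, so the example below swaps in plain inverse-frequency weighting as one common approach; the genre labels and the `rebalance_weights` helper are hypothetical.

```python
from collections import Counter


def rebalance_weights(genres):
    """Weight each example inversely to its genre's count.

    With these weights every genre carries the same total sampling
    mass, and the weights sum to 1. This is an assumed scheme, not
    the one OpenAI used.
    """
    counts = Counter(genres)
    n_genres = len(counts)
    return [1.0 / (n_genres * counts[g]) for g in genres]


# A toy dataset where fantasy dominates the creative-writing examples.
genres = ["fantasy"] * 6 + ["mystery"] * 2 + ["sci-fi"] * 2
weights = rebalance_weights(genres)

# Total sampling mass per genre after rebalancing.
mass = Counter()
for genre, weight in zip(genres, weights):
    mass[genre] += weight
print(dict(mass))
```

Before rebalancing, fantasy accounts for 60% of the toy dataset; afterwards each genre contributes an equal share, which is the effect "reducing the fantasy sample weight" is meant to achieve.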

5. Why was GPT-5.5's launch smoother than GPT-5.0's?

GPT-5.0 suffered from several high-profile issues—like refusing certain topics and producing overly cautious responses—that emerged only after millions of users interacted with it. In contrast, GPT-5.5 benefited from extended internal testing and a more robust anomaly detection pipeline. OpenAI had learned from the earlier rollout to spend more time stress-testing edge cases, like repeated concept biases. The goblin fixation was one of dozens of similar micro-issues caught early. This proactive approach meant fewer hotfixes and less user disruption after launch.

6. Could similar fixations happen in future models?

Yes, because training large language models inevitably involves statistical quirks from imbalanced data. However, OpenAI now has a playbook to detect and correct such issues earlier. They've expanded their automated probe libraries to include hundreds of "oddity detectors" that scan for overrepresentation of specific words, genres, or character types. The goblin fix process has been documented as a case study for future safety checks. While no system is perfect, the likelihood of similar blunders reaching production has decreased significantly.
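An "oddity detector" of this kind could, in its simplest form, compare word frequencies in model outputs against a reference corpus. The sketch below is an assumption about how such a scan might work, not a description of OpenAI's probe library; the function names, smoothing, and thresholds are illustrative.

```python
import re
from collections import Counter


def word_counts(texts):
    """Count lowercase word occurrences across a list of texts."""
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"[a-z']+", text.lower()))
    return counts


def overrepresented(outputs, reference, min_ratio=3.0, min_count=2):
    """Return words whose smoothed frequency in outputs is at least
    min_ratio times their smoothed frequency in the reference corpus."""
    out_counts = word_counts(outputs)
    ref_counts = word_counts(reference)
    out_total = sum(out_counts.values())
    ref_total = sum(ref_counts.values())
    vocab = len(set(out_counts) | set(ref_counts))
    flagged = {}
    for word, count in out_counts.items():
        if count < min_count:
            continue  # ignore words too rare to be a pattern
        # Add-one smoothing keeps unseen reference words from dividing by zero.
        out_freq = (count + 1) / (out_total + vocab)
        ref_freq = (ref_counts[word] + 1) / (ref_total + vocab)
        ratio = out_freq / ref_freq
        if ratio >= min_ratio:
            flagged[word] = ratio
    return flagged


model_outputs = [
    "the goblin baked bread",
    "the goblin checked the forecast",
    "a goblin fixed the bug",
]
reference_texts = [
    "the baker baked bread",
    "the forecaster checked the forecast",
    "a developer fixed the bug",
]

flagged_words = overrepresented(model_outputs, reference_texts)
print(sorted(flagged_words))
```

Common words such as "the" appear equally often in both sets and are not flagged, while "goblin" stands out because it never occurs in the reference texts.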

7. What lessons did OpenAI learn from this incident?

The key takeaway is that fine-tuning for creativity can unintentionally amplify narrow patterns. OpenAI now requires all new fine-tuning datasets to pass a "thematic diversity" score before use. They also introduced adversarial concept testing, in which models are deliberately given open-ended prompts to see whether they latch onto any single theme. Finally, the incident reinforced the value of proactive monitoring: unlike GPT-5.0's reactive patches, GPT-5.5's issues were caught in pre-release validation. As one researcher noted, "We'd rather catch a goblin in the lab than in the wild."
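The article does not define how a "thematic diversity" score is calculated. One plausible reading, sketched here purely as an assumption, is the normalized Shannon entropy of theme labels in a dataset: 1.0 when themes are evenly spread, approaching 0 when one theme dominates. The theme labels and the `thematic_diversity` function are hypothetical.

```python
import math
from collections import Counter


def thematic_diversity(themes):
    """Normalized Shannon entropy of theme labels, in the range [0, 1].

    Assumed definition for illustration only. Returns 0.0 when fewer
    than two distinct themes are present.
    """
    counts = Counter(themes)
    total = sum(counts.values())
    if len(counts) < 2:
        return 0.0
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    # Divide by the maximum possible entropy for this many themes.
    return entropy / math.log(len(counts))


balanced = ["goblin", "detective", "robot", "pirate"] * 5
skewed = ["goblin"] * 17 + ["detective", "robot", "pirate"]

print(f"balanced: {thematic_diversity(balanced):.2f}")
print(f"skewed:   {thematic_diversity(skewed):.2f}")
```

Because the score is normalized by the number of themes actually observed, a dataset with only two evenly split themes would also score 1.0, so a real gate would likely need a minimum theme count as well as a minimum score.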