Quick Facts

- Category: Technology

- Published: 2026-05-03 08:26:58

Introduction: A Practical Leap in AI-Assisted Development

Mistral AI, a prominent player in the open-source AI landscape, has announced significant updates to its developer ecosystem. The company is now offering remote agents in its coding agent platform, Mistral Vibe, alongside the public preview of a new dense language model, Mistral Medium 3.5. These developments aim to streamline software development workflows by allowing developers to offload complex coding tasks to the cloud and benefit from a more capable underlying model. This article explores what these changes mean for developers and how they fit into Mistral’s broader strategy.

What Is Mistral Vibe?

Mistral Vibe is a command-line interface (CLI) tool that turns an AI model into a coding assistant capable of a wide range of software engineering tasks. From writing and refactoring code to generating test suites, investigating CI failures, and managing pull requests, Vibe acts like a tireless junior developer that works across your entire codebase. Until now, all Vibe sessions ran locally, which tied the agent to the developer’s machine and required constant supervision. The latest update removes that limitation.

Remote Agents: Coding Without Being Tethered

How Cloud-Based Sessions Work

With the introduction of remote agents, developers can now start a coding session in the cloud and step away while the AI works through long tasks. Multiple sessions can run in parallel, eliminating the bottleneck of waiting for each step to complete before issuing new instructions. You can launch a remote agent directly from the Mistral Vibe CLI or from Le Chat, Mistral’s consumer-facing assistant. While the agent runs, you can inspect its progress through file diffs, tool calls, and status updates, and even approve or redirect actions as needed.
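To make the parallel-session idea concrete, here is a minimal sketch that simulates it with Python's standard `asyncio` module. It does not call Mistral Vibe or any Mistral API: `SessionLog`, `run_session`, and `run_all` are hypothetical names, and each "step" simply stands in for a tool call or file diff that a real remote agent would report.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class SessionLog:
    """Hypothetical record of what one simulated remote session did."""
    task: str
    events: list[str] = field(default_factory=list)
    status: str = "pending"


async def run_session(task: str, steps: int) -> SessionLog:
    """Simulate one session; each step stands in for a tool call or diff."""
    log = SessionLog(task=task, status="running")
    for i in range(1, steps + 1):
        await asyncio.sleep(0)  # yield control so other sessions can progress
        log.events.append(f"step {i}/{steps}")
    log.status = "done"
    return log


async def run_all(tasks: dict[str, int]) -> list[SessionLog]:
    """Start every session at once and wait until all of them have finished."""
    return await asyncio.gather(*(run_session(t, n) for t, n in tasks.items()))


if __name__ == "__main__":
    logs = asyncio.run(run_all({"fix flaky test": 3, "refactor parser": 2}))
    for log in logs:
        print(log.task, log.status, log.events)
```

The point of the sketch is the shape of the workflow: tasks are issued together, each keeps its own inspectable log, and nothing waits on a single session finishing first.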

Seamless Session Migration

One particularly useful feature is the ability to “teleport” an ongoing local CLI session to the cloud. This migration preserves the entire session history, task state, and any approvals, so you don’t lose your place. This means you can start a task on your machine, then transfer it to the cloud when you need to close your laptop or switch contexts.
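Mistral has not published the wire format behind this migration, so the following is only an illustrative sketch of what "preserving history, task state, and approvals" could look like: a snapshot that is serialized on one side and restored on the other. `SessionSnapshot`, `export_snapshot`, and `import_snapshot` are hypothetical names, not part of Vibe.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class SessionSnapshot:
    """Hypothetical container for the three things the article says survive."""
    history: list[str] = field(default_factory=list)          # prior messages
    task_state: dict[str, str] = field(default_factory=dict)  # progress so far
    approvals: list[str] = field(default_factory=list)        # granted actions


def export_snapshot(snapshot: SessionSnapshot) -> str:
    """Serialize the local session so it can be sent elsewhere."""
    return json.dumps(asdict(snapshot), sort_keys=True)


def import_snapshot(payload: str) -> SessionSnapshot:
    """Rebuild the session on the receiving side from the serialized form."""
    return SessionSnapshot(**json.loads(payload))


if __name__ == "__main__":
    local = SessionSnapshot(
        history=["user: migrate the config loader", "agent: reading config.py"],
        task_state={"current_step": "editing", "branch": "config-refactor"},
        approvals=["run tests"],
    )
    restored = import_snapshot(export_snapshot(local))
    print(restored == local)
```

A round trip that yields an equal object is the property that matters here: if anything in the snapshot were dropped, the resumed session would have to re-ask for approvals or redo work.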

Isolation and Integration

Each remote session runs in an isolated sandbox, so even broad code edits and package installations don’t interfere with other work or your local environment. When the agent finishes, it can automatically open a pull request on GitHub and notify you via Slack, Teams, or other integrated tools. Behind the scenes, Mistral uses Workflows, its orchestration layer in Mistral Studio, to bring Vibe’s capabilities into Le Chat. This layer was originally built for Mistral’s own internal use and later offered to enterprise customers; now it’s available to everyone. Developers studying agentic systems will find this architecture instructive.

Vibe integrates with popular development tools: GitHub for code and pull requests, Linear and Jira for issue tracking, Sentry for incident monitoring, and communication platforms like Slack and Microsoft Teams for reporting results.

Mistral Medium 3.5: The Model Powering Everything

Specs and Performance

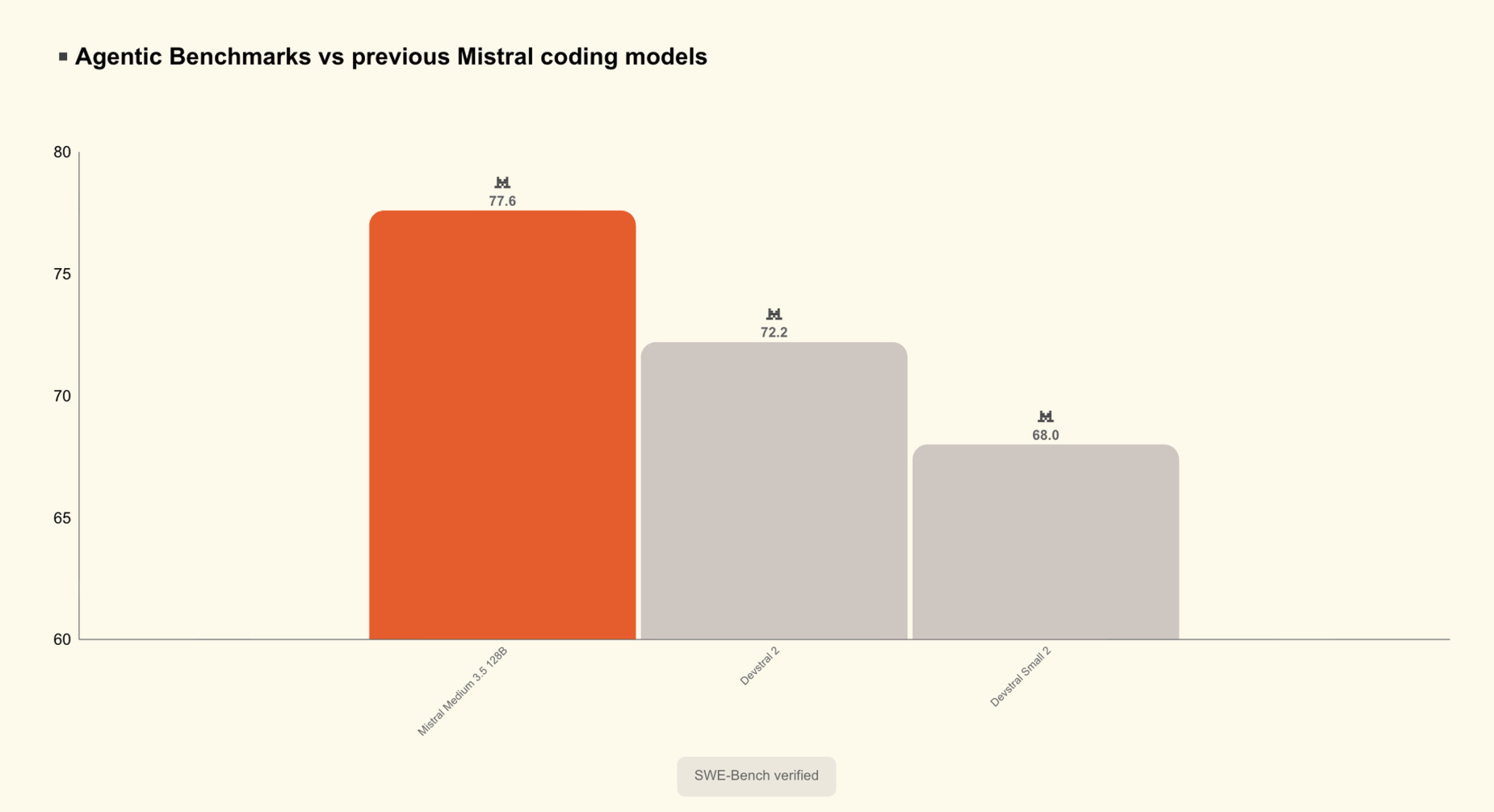

The new Mistral Medium 3.5 is a 128-billion parameter dense model that now serves as the default engine for both Vibe and Le Chat. With a 77.6% SWE-Bench Verified score, it represents a substantial improvement over its predecessor, particularly in software engineering tasks such as code generation, debugging, and test creation. The architecture is dense rather than mixture-of-experts: every parameter is used for every token instead of a routed subset, which is the basis for the claim of consistent performance across a wide range of inputs.
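For a sense of scale, the two headline numbers can be turned into rough back-of-the-envelope figures. The sketch below assumes common weight precisions (the article does not say how the model is served) and assumes the score was measured over the full 500-task SWE-bench Verified set; the function names are illustrative only.

```python
PARAMS = 128e9           # parameter count stated in the article
SWE_BENCH_SCORE = 0.776  # SWE-Bench Verified score stated in the article
VERIFIED_TASKS = 500     # size of the SWE-bench Verified set

# Bytes per parameter at common precisions (an assumption, not a Mistral spec).
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


def tasks_resolved(score: float, total: int) -> int:
    """Number of benchmark tasks implied by a resolution rate."""
    return round(score * total)


if __name__ == "__main__":
    for precision, size in BYTES_PER_PARAM.items():
        print(precision, weight_memory_gb(PARAMS, size), "GB")
    print(tasks_resolved(SWE_BENCH_SCORE, VERIFIED_TASKS), "of", VERIFIED_TASKS)
```

Because the model is dense, all of those weights take part in every token's computation; the figures cover weights only and ignore activations and the key-value cache.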

Why This Matters for Coding Agents

A capable model is the foundation of any effective coding agent. Mistral Medium 3.5’s strong benchmark performance should translate to fewer errors, better understanding of context, and more accurate code completions, although benchmark gains do not always carry over one-to-one to a given codebase. Developers using Vibe with this model can expect smoother sessions, especially for complex refactoring or multi-step workflows. The public preview allows developers to test the model in real-world scenarios and provide feedback before a full release.

Practical Implications for Developers

Together, these updates move AI-assisted coding from a supervised, local tool toward an autonomous, cloud-based assistant. Developers can now deploy multiple agents to work on separate tasks simultaneously (for instance, one agent fixing a bug in a legacy module while another writes tests for a new feature) without tying up their local machine. Diffs and tool calls keep each agent’s actions inspectable, while sandbox isolation keeps its edits and package installations away from other sessions and the local environment.

For teams, the integration with project management and incident tracking tools means that Vibe becomes part of the existing workflow rather than a separate tool. A failed CI build can trigger an agent to investigate, propose a fix, and open a PR, all within the same chat thread.
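As a rough illustration of that CI-to-agent handoff, the sketch below turns a failed-build event into a task description that could be handed to an agent. The event fields and the `build_agent_task` helper are hypothetical; they do not reflect the payload of any particular CI system or a Vibe interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CIEvent:
    """Hypothetical, simplified CI notification."""
    repo: str
    branch: str
    job: str
    status: str    # e.g. "passed" or "failed"
    log_tail: str  # last lines of the build log


def build_agent_task(event: CIEvent) -> Optional[str]:
    """Return an instruction for the agent, or None if nothing needs fixing."""
    if event.status != "failed":
        return None
    return (
        f"CI job '{event.job}' failed on {event.repo}@{event.branch}.\n"
        "Investigate the failure, propose a fix, and open a pull request.\n"
        f"Log excerpt:\n{event.log_tail}"
    )


if __name__ == "__main__":
    event = CIEvent(
        repo="example/service",
        branch="main",
        job="unit-tests",
        status="failed",
        log_tail="AssertionError: expected 200, got 500",
    )
    print(build_agent_task(event))
```

Keeping the trigger as a pure function of the event makes it easy to test and to decide, per team, which failures are worth delegating.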

Conclusion: A Step Toward Autonomous Development

Mistral AI’s latest offerings, remote coding agents and the improved Mistral Medium 3.5 model, represent a significant step toward making AI an autonomous partner in software development. By removing the need for constant oversight and tying the agent into existing development ecosystems, Mistral is extending what open-source AI can do in practical, day-to-day coding tasks. Developers interested in trying these features can start with the Vibe CLI or Le Chat, and explore the public preview of the new model. As the field of coding agents matures, Mistral’s approach, which combines an orchestration layer with a powerful dense model, offers a solid foundation for building the next generation of developer tools.