Overview

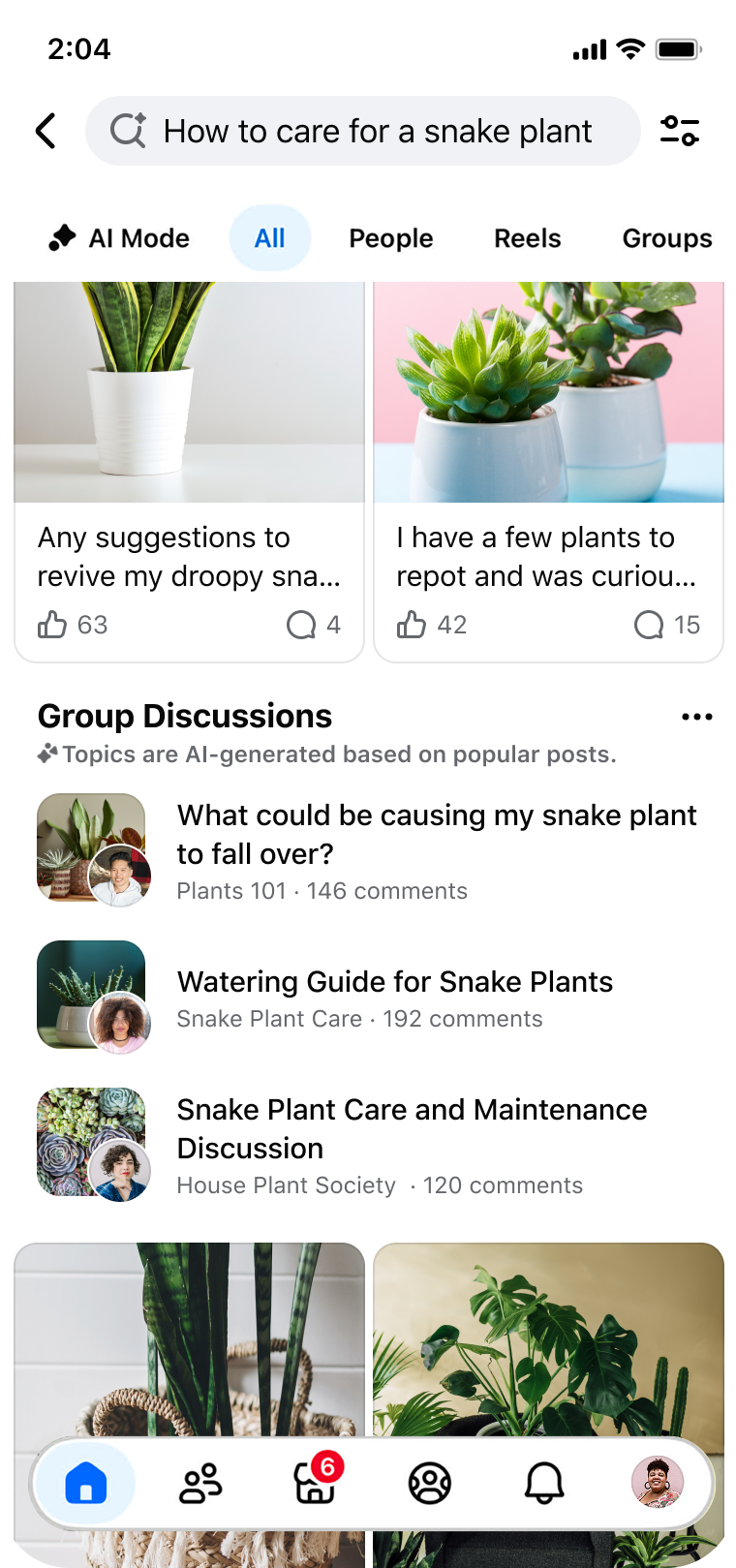

Modernizing search within community platforms like Facebook Groups requires moving beyond simple keyword matching. This tutorial walks through the core technical decisions and implementation steps behind a hybrid retrieval architecture and an automated, model-based evaluation system, the same approach used to overhaul Facebook Groups Search. You'll learn how to design, build, and evaluate a system that can understand user intent, surface relevant community content, and reduce the effort required to find reliable answers.

Prerequisites

Before diving in, ensure you have a basic understanding of:

- Information Retrieval concepts (e.g., BM25, TF-IDF, vector embeddings)

- Python programming (we'll use libraries like sentence-transformers, faiss-cpu, rank-bm25, transformers, and scikit-learn)

- Natural Language Processing basics (tokenization, embedding models)

- Machine Learning evaluation (metrics like precision, recall, NDCG)

You'll also need a dataset of community posts and comments. For this guide, we assume a simple JSON format where each document has fields: id, title, body, and tags.
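A record in that format might look like the example below. This is a minimal sketch with a made-up post; `load_documents` is a hypothetical helper that also joins title and body into the single `text` field the retrieval code indexes.

```python
import json

# A made-up example record in the assumed format: id, title, body, tags
SAMPLE = """[
  {"id": "p1", "title": "Snake plant watering",
   "body": "Water when soil is dry, about every 2-3 weeks.",
   "tags": ["plants", "care"]}
]"""


def load_documents(raw_json):
    """Parse posts and add a 'text' field combining title and body (hypothetical helper)."""
    documents = json.loads(raw_json)
    for doc in documents:
        # Index title and body together so both are searchable
        doc["text"] = f"{doc['title']} {doc['body']}"
    return documents


documents = load_documents(SAMPLE)
print(documents[0]["text"])
```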

Step-by-Step Instructions

1. Analyze Friction Points

The three key friction points in community search are discovery, consumption, and validation. Understanding these shapes your architectural choices.

- Discovery: Traditional lexical search fails when the wording of a query doesn't match the community's vocabulary (e.g., a search for 'small cakes with frosting' misses posts that only say 'cupcakes').

- Consumption: Even when relevant posts are found, users must scroll through many comments to extract answers—what we call the 'effort tax'.

- Validation: Users seek trusted opinions before making decisions (e.g., buying a vintage car on Marketplace), but relevant discussions are scattered across groups.

Your system must address all three. We'll tackle discovery with hybrid retrieval, consumption with snippet extraction, and validation with ranking based on community signals.

2. Design a Hybrid Retrieval Architecture

Instead of using only keyword matching or only semantic search, combine both for robust results. The architecture has two branches:

- Lexical Branch: Use BM25 (or Elasticsearch, whose default similarity is BM25) for exact term matching; this handles rare terms such as product names and model numbers well.

- Semantic Branch: Use dense vector embeddings (e.g., from all-MiniLM-L6-v2) to capture meaning even when words differ.

At query time, retrieve the top K results from each branch and merge them, either with reciprocal rank fusion (RRF), which combines ranks rather than raw scores, or with a learned model. The Python outline below uses a simpler weighted sum of normalized scores.
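RRF itself needs only each branch's ranked list, not its raw scores, which avoids forcing BM25 and cosine scores onto a common scale. A minimal sketch, assuming each branch returns document ids ordered best-first; `reciprocal_rank_fusion` and the toy rankings are illustrative, not part of any library:

```python
def reciprocal_rank_fusion(ranked_lists, k=60, top_k=10):
    """Fuse several best-first lists of doc ids with reciprocal rank fusion.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is the constant commonly used in practice.
    """
    scores = {}
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism
    fused = sorted(scores, key=lambda d: (-scores[d], d))
    return fused[:top_k]


lexical_top = ["d3", "d1", "d7"]   # hypothetical BM25 ranking
semantic_top = ["d1", "d5", "d3"]  # hypothetical embedding ranking
print(reciprocal_rank_fusion([lexical_top, semantic_top], top_k=3))  # ['d1', 'd3', 'd5']
```

Documents found by both branches (here `d1` and `d3`) rise to the top even if neither branch ranked them first, which is the behavior hybrid retrieval is after.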

```python
import faiss
import numpy as np
from rank_bm25 import BM25Okapi
from sentence_transformers import SentenceTransformer

# Load the embedding model
embedder = SentenceTransformer('all-MiniLM-L6-v2')

# Assumes `documents` is already loaded: a list of dicts with 'id', 'title', 'body', 'tags'
texts = [f"{doc['title']} {doc['body']}" for doc in documents]

# Lexical branch: BM25 over lowercased whitespace tokens
tokenized_corpus = [text.lower().split() for text in texts]
bm25 = BM25Okapi(tokenized_corpus)

# Semantic branch: exact inner-product FAISS index.
# After L2 normalization, inner product equals cosine similarity.
embeddings = embedder.encode(texts, convert_to_numpy=True).astype('float32')
faiss.normalize_L2(embeddings)
index = faiss.IndexFlatIP(embeddings.shape[1])
index.add(embeddings)


def min_max(scores):
    """Rescale scores to [0, 1]; return zeros if all scores are equal."""
    spread = scores.max() - scores.min()
    if spread == 0:
        return np.zeros_like(scores)
    return (scores - scores.min()) / spread


def hybrid_search(query, top_k=10, alpha=0.5):
    # Lexical scores: one per document, in corpus order
    lexical_scores = bm25.get_scores(query.lower().split())

    # Semantic scores: FAISS returns hits sorted by similarity,
    # so scatter them back into corpus order using the returned ids
    query_emb = embedder.encode([query], convert_to_numpy=True).astype('float32')
    faiss.normalize_L2(query_emb)
    sims, ids = index.search(query_emb, len(texts))
    semantic_scores = np.zeros(len(texts), dtype='float32')
    semantic_scores[ids[0]] = sims[0]

    # Cosine lies in [-1, 1] and BM25 is unbounded, so normalize both before mixing
    combined = alpha * min_max(lexical_scores) + (1 - alpha) * min_max(semantic_scores)

    # Corpus indices of the top_k documents, best first
    return np.argsort(combined)[::-1][:top_k]
```

3. Implement Automated Model-Based Evaluation

Manual evaluation is slow and subjective. Build an automated pipeline around a hold-out set of queries with human-annotated relevance judgments, and score each version of the system with metrics such as NDCG@10, MRR, and Recall@20. The same pipeline can tune hyperparameters such as the fusion weight alpha.
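MRR and Recall@20 are simple enough to compute directly from a ranked list and a set of judged-relevant ids, which also makes their definitions explicit. A dependency-free sketch with binary relevance; the function names and toy ids are illustrative:

```python
def recall_at_k(retrieved, relevant, k=20):
    """Fraction of the relevant docs that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & set(relevant)) / len(relevant)


def mean_reciprocal_rank(runs):
    """MRR over (retrieved, relevant) pairs: mean of 1/rank of the first relevant hit."""
    total = 0.0
    for retrieved, relevant in runs:
        for rank, doc_id in enumerate(retrieved, start=1):
            if doc_id in relevant:
                total += 1.0 / rank
                break  # only the first relevant hit counts
    return total / len(runs) if runs else 0.0


runs = [
    (["d4", "d2", "d9"], {"d2"}),  # first relevant hit at rank 2
    (["d1"], {"d1"}),              # rank 1
    (["d7"], {"d8"}),              # no relevant hit
]
print(recall_at_k(["d4", "d2", "d9", "d1"], {"d2", "d1", "d8"}), mean_reciprocal_rank(runs))
```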

```python
import numpy as np
from sklearn.metrics import ndcg_score


def evaluate_model(test_queries, ground_truth, search_fn, n_docs, k=10):
    """Mean NDCG@k over a set of judged test queries.

    ground_truth[i] is the set of corpus indices judged relevant for test_queries[i];
    search_fn(query, top_k=...) returns corpus indices, best first (e.g. hybrid_search).
    """
    ndcg_scores = []
    for query, relevant_docs in zip(test_queries, ground_truth):
        retrieved = search_fn(query, top_k=k)

        # Binary relevance over the whole corpus, so relevant documents the
        # system failed to retrieve still count toward the ideal DCG
        true_relevance = np.zeros((1, n_docs))
        true_relevance[0, list(relevant_docs)] = 1

        # Turn the ranked list into scores (rank 1 gets the highest score);
        # unretrieved documents get 0
        scores = np.zeros((1, n_docs))
        scores[0, retrieved] = np.arange(len(retrieved), 0, -1)

        ndcg_scores.append(ndcg_score(true_relevance, scores, k=k))
    return float(np.mean(ndcg_scores))


# Usage: evaluate_model(test_queries, ground_truth, hybrid_search, n_docs=len(texts))
```

To build the judged query set, sample queries from search logs and ask human raters to label the retrieved results as relevant or not. Hold these judgments out as the validation set for automated evaluation.
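With judged queries in hand, tuning the fusion weight alpha reduces to a grid search. A minimal sketch that maximizes mean Recall@k, assuming the per-query lexical and semantic scores have been precomputed and already normalized to [0, 1]; `tune_alpha` is a hypothetical helper, and using NDCG@10 as the objective instead works the same way:

```python
def tune_alpha(lexical, semantic, relevant, k=10, grid=None):
    """Grid-search the fusion weight alpha to maximize mean Recall@k.

    lexical[q] and semantic[q] are per-document scores for query q, normalized
    to [0, 1] and in corpus order; relevant[q] is a set of corpus indices.
    """
    grid = grid if grid is not None else [i / 10 for i in range(11)]
    best_alpha, best_recall = None, -1.0
    for alpha in grid:
        recalls = []
        for lex, sem, rel in zip(lexical, semantic, relevant):
            combined = [alpha * l + (1 - alpha) * s for l, s in zip(lex, sem)]
            top = sorted(range(len(combined)), key=lambda i: -combined[i])[:k]
            recalls.append(len(set(top) & rel) / len(rel) if rel else 0.0)
        mean_recall = sum(recalls) / len(recalls)
        # Strict '>' keeps the smallest alpha among ties
        if mean_recall > best_recall:
            best_alpha, best_recall = alpha, mean_recall
    return best_alpha, best_recall


# Toy example: one query whose only relevant doc (index 0) is favored by BM25
print(tune_alpha([[1.0, 0.0, 0.2]], [[0.0, 0.9, 0.5]], [{0}], k=1))
```

Tune alpha on one split of the judged queries and report metrics on another, so the chosen value is not scored on the same data it was fit to.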

4. Reduce Consumption Effort with Snippets

After retrieval, extract the most relevant sentence or phrase from each post. Use a BERT-based extractive model or simply highlight the part that matches the query. For example, if the query is 'snake plant watering', the snippet might be 'Water when soil is dry, about every 2-3 weeks.'
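The "simply highlight" option can be as small as picking the sentence that shares the most terms with the query. A minimal standard-library sketch; `overlap_snippet` is a hypothetical helper and the example post is made up:

```python
import re


def overlap_snippet(post_text, query):
    """Return the sentence sharing the most word tokens with the query (a simple heuristic)."""
    query_terms = set(re.findall(r"\w+", query.lower()))
    # Naive sentence split on '.', '!' or '?' followed by whitespace
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", post_text) if s.strip()]
    if not sentences:
        return ""
    # max() keeps the first sentence among ties
    return max(sentences, key=lambda s: len(query_terms & set(re.findall(r"\w+", s.lower()))))


post = ("Snake plants are easy. Water your snake plant when the soil is dry, "
        "about every 2-3 weeks. They tolerate low light.")
print(overlap_snippet(post, "snake plant watering"))
```

Because it matches exact tokens, 'watering' does not match 'water' and 'plants' does not match 'plant'; stemming or an extractive QA model handles such variants better.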

You can use a pretrained extractive question-answering model such as distilbert-base-cased-distilled-squad (DistilBERT fine-tuned on SQuAD) to find the answer span within a post, treating the query as the question.

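```python
from transformers import pipeline

# Extractive QA model: DistilBERT fine-tuned on SQuAD
qa_pipeline = pipeline("question-answering", model="distilbert-base-cased-distilled-squad")


def get_snippet(post_text, query):
    # The query acts as the question and the post as the context;
    # the pipeline returns the highest-scoring answer span
    result = qa_pipeline(question=query, context=post_text)
    return result['answer']
```

5. Enable Validation through Ranking Signals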

For validation, incorporate community signals: number of likes, number of comments, comment stance (positive/negative), and author expertise. Train a lightweight ranker (e.g., XGBoost) that takes these features plus the combined retrieval score as input and produces the final ranking. This helps surface authoritative answers.
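Much of the work before training is assembling features and grouping candidates by query, since ranking objectives compare documents only within the same query. A minimal plain-Python sketch; the `Candidate` fields, the log scaling, and the neutral 0.5 stance default are assumptions, and the resulting rows and group sizes are the shape XGBoost's `XGBRanker` accepts through its `group` argument:

```python
import math
from dataclasses import dataclass


@dataclass
class Candidate:
    """One retrieved post for one query. Field names are hypothetical."""
    query_id: str
    retrieval_score: float   # combined lexical + semantic score
    likes: int
    comments: int
    positive_comments: int   # comments classified as positive/agreeing
    author_expertise: float  # e.g. a 0-1 score for the author's track record


def to_features(c):
    # Assumption: posts without comments get a neutral stance of 0.5
    stance = c.positive_comments / c.comments if c.comments else 0.5
    # log1p dampens heavy-tailed counts so one viral post does not dominate
    return [c.retrieval_score, math.log1p(c.likes), math.log1p(c.comments),
            stance, c.author_expertise]


def build_ranking_data(candidates, labels):
    """Return (feature rows, labels, group sizes) with each query's rows contiguous."""
    # sorted() is stable, so candidates keep their order within a query
    order = sorted(range(len(candidates)), key=lambda i: candidates[i].query_id)
    X = [to_features(candidates[i]) for i in order]
    y = [labels[i] for i in order]
    groups = []
    for i in order:
        qid = candidates[i].query_id
        if groups and groups[-1][0] == qid:
            groups[-1][1] += 1
        else:
            groups.append([qid, 1])
    return X, y, [size for _, size in groups]
```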

Common Mistakes

Over-relying on One Retrieval Method

Relying purely on lexical search fails on synonyms; relying purely on semantic search can blur rare, exact terms such as model numbers (e.g., 'vintage Corvette 427'). Always combine both.

Ignoring Query Understanding

Don't just embed the query as-is. Expand queries with synonyms or use query reformulation (e.g., from 'cappuccino' to 'Italian coffee drink'). Simple strategies like adding WordNet synonyms can improve recall.
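Synonym expansion can start as simply as substituting known alternatives into the query. A minimal sketch; the `SYNONYMS` table is a made-up stand-in for WordNet or a learned reformulation model, and `expand_query` is a hypothetical helper:

```python
# Hypothetical hand-written synonym table standing in for WordNet or a learned model
SYNONYMS = {
    "cupcakes": ["small cakes", "frosted cakes"],
    "cappuccino": ["italian coffee drink"],
}


def expand_query(query, synonyms=SYNONYMS):
    """Return the lowercased query plus one variant per known synonym substitution."""
    words = query.lower().split()
    variants = [" ".join(words)]
    for term, alternatives in synonyms.items():
        if term in words:
            for alt in alternatives:
                variants.append(" ".join(alt if w == term else w for w in words))
    return variants


print(expand_query("Best cupcakes in town"))
```

Each variant can be searched separately and the result lists merged, for example with reciprocal rank fusion.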

Skipping Real-World Evaluation

Metrics on a static dataset don't capture user satisfaction. Always run A/B tests with real users. Are users clicking more? Spending less time per session? Engagement is the ultimate metric.
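For a metric like click-through rate, "are users clicking more?" becomes a comparison of two proportions. A minimal sketch of a two-sided two-proportion z-test using only the standard library; the function name is illustrative and the example counts are made up:

```python
import math


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for a difference in click-through rate between arms A and B.

    Returns (z, p_value). Assumes independent sessions, large samples, and at
    least one click and one non-click overall (otherwise the standard error is 0).
    """
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal: P(|Z| >= |z|) = erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: control (A) vs. new search ranking (B)
z, p = two_proportion_z_test(1000, 10000, 1100, 10000)
print(f"z = {z:.2f}, p = {p:.3f}")
```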

Neglecting Latency

Semantic search with large models can be slow. Use approximate nearest neighbor (ANN) indexes (e.g., FAISS IVF) and cache frequent queries. Keep index updates asynchronous to avoid blocking.

Summary

Modernizing community search involves tackling three pain points: discovery (hybrid lexical+semantic retrieval), consumption (snippet extraction), and validation (ranking with community signals). By implementing a hybrid architecture and automated evaluation, you can significantly improve relevance without increasing error rates. Start with a small dataset, iterate based on evaluation metrics, and always validate with user feedback. The result is a search that truly unlocks the power of community knowledge.